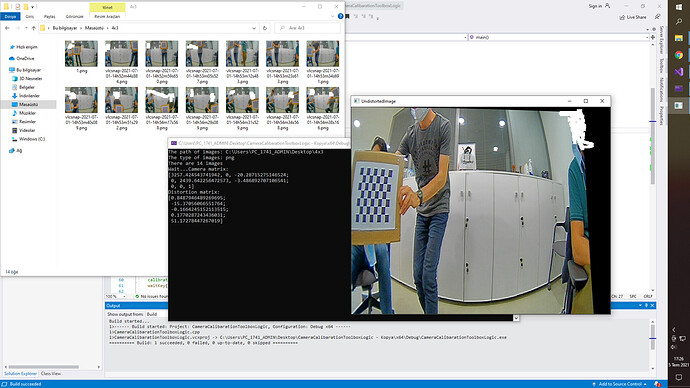

I’m making a camera calibration toolkit with OpenCV and C++. I’m using the `calibrateCamera` and `undistort` functions from opencv2/calib3d. I get good results for two image sets but terrible results for five image sets. All image sets are good and have enough pictures to calibrate the camera.

For example, when I try my code with that image set, I can find and draw the chessboard corners correctly, but everything else is wrong. The distortion coefficients are not correct and the camera's optical center comes out negative, so the undistorted image looks weird.

What do you think about that?

taking all pictures together, the corners of the camera view have to be covered with points. lens distortion, as well as "un"distortion, has the most severe effect there, so the calibration needs data points there.

single board views should cover much of the view, a lot more than your pictures show
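a rough way to check the coverage point above is to bin all detected corners from all pictures into a coarse grid and look at the cells in the image corners. this is only a sketch: `Pt` is a hypothetical stand-in for `cv::Point2f` so it compiles without OpenCV, and `coverageGrid`, `imageCornersCovered`, the grid size and the `minPoints` threshold are made-up names and values, not anything from OpenCV.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::Point2f so the sketch compiles without OpenCV.
struct Pt { float x; float y; };

// Count how many detected corners fall into each cell of a cols x rows grid
// laid over the image. The result is row-major: index = row * cols + col.
std::vector<int> coverageGrid(const std::vector<Pt>& corners,
                              int imageWidth, int imageHeight,
                              int cols, int rows)
{
    assert(imageWidth > 0 && imageHeight > 0 && cols > 0 && rows > 0);
    std::vector<int> counts(static_cast<std::size_t>(cols) * rows, 0);
    for (const Pt& p : corners) {
        if (p.x < 0 || p.y < 0 || p.x >= imageWidth || p.y >= imageHeight)
            continue;  // ignore points outside the image
        // Clamp to guard against floating-point rounding at the far edge.
        const int c = std::min(static_cast<int>(p.x * cols / imageWidth), cols - 1);
        const int r = std::min(static_cast<int>(p.y * rows / imageHeight), rows - 1);
        ++counts[static_cast<std::size_t>(r) * cols + c];
    }
    return counts;
}

// True if each of the four corner cells of the grid holds at least
// minPoints detected corners (minPoints is an arbitrary threshold).
bool imageCornersCovered(const std::vector<int>& counts,
                         int cols, int rows, int minPoints)
{
    assert(counts.size() == static_cast<std::size_t>(cols) * rows);
    const std::size_t topLeft = 0;
    const std::size_t topRight = static_cast<std::size_t>(cols) - 1;
    const std::size_t bottomLeft = static_cast<std::size_t>(rows - 1) * cols;
    const std::size_t bottomRight = bottomLeft + cols - 1;
    return counts[topLeft] >= minPoints && counts[topRight] >= minPoints &&
           counts[bottomLeft] >= minPoints && counts[bottomRight] >= minPoints;
}
```

in a real program you would append the corners found in every picture to one vector, call `coverageGrid` once, and take more pictures for whichever cells are still empty.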

it’s best to hold your board at an angle, i.e. corners pointing up/down/left/right. the board is symmetric, so the order of points can flip. use `drawChessboardCorners` and observe the order of the points in real time.
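besides watching the drawn corners, you can log which way the first row of detected corners runs in each picture, so a flipped ordering shows up in the output. a minimal sketch, assuming the corners come in row-major order with `patternCols` corners per row; `Corner` is a hypothetical stand-in for `cv::Point2f`, and `RowDirection` and `firstRowDirection` are made-up names for illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for cv::Point2f so the sketch compiles without OpenCV.
struct Corner { float x; float y; };

enum class RowDirection { Right, Left, Down, Up };

// Coarse image direction of the first row of detected corners, measured from
// the first corner to the last corner of that row. Image y grows downward.
RowDirection firstRowDirection(const std::vector<Corner>& corners, int patternCols)
{
    assert(patternCols >= 2);
    assert(corners.size() >= static_cast<std::size_t>(patternCols));
    const float dx = corners[patternCols - 1].x - corners[0].x;
    const float dy = corners[patternCols - 1].y - corners[0].y;
    if (std::fabs(dx) >= std::fabs(dy))
        return dx >= 0 ? RowDirection::Right : RowDirection::Left;
    return dy >= 0 ? RowDirection::Down : RowDirection::Up;
}
```

if the direction changes between pictures where you did not actually turn the board, the detected order has flipped for those views.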

I’d recommend prototyping in Python. C++ is a low-level language.

was my comment above not to your liking?

When I look at your image folder, the chessboard is too small relative to the image, and the board appears only in the outer part of the image. Capture the board in a variety of positions and orientations.