Hi guys,

I have gone through the process of installing CVAT on my local machine and annotated a small set of house images with gutters, downspouts & waste pipes. I then exported to YOLO 1.1 (the only YOLO option in CVAT), which gave me a folder with a text file for each image, e.g.:

    2 0.199117 0.174856 0.395400 0.045975
    2 0.670996 0.250719 0.230192 0.114938
    1 0.410612 0.373634 0.058242 0.747269
    0 0.530154 0.380531 0.082758 0.761062
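As far as I understand the YOLO text format, each line is `class_id x_center y_center width height`, with the four box values normalised to the image size (0 to 1). A minimal sketch of turning one line back into pixel corners, assuming that reading is right; the helper name `yolo_line_to_pixels` and the 1000 x 1000 image size are made up for illustration:

```python
def yolo_line_to_pixels(line, img_w, img_h):
    """Convert one YOLO label line to (class_id, x1, y1, x2, y2) in pixels."""
    parts = line.split()
    class_id = int(parts[0])
    # The remaining four values are fractions of the image width/height.
    xc, yc, w, h = (float(v) for v in parts[1:5])
    x1 = (xc - w / 2) * img_w
    y1 = (yc - h / 2) * img_h
    x2 = (xc + w / 2) * img_w
    y2 = (yc + h / 2) * img_h
    return class_id, round(x1), round(y1), round(x2), round(y2)


# Hypothetical 1000 x 1000 image, chosen only to keep the numbers readable.
print(yolo_line_to_pixels("0 0.530154 0.380531 0.082758 0.761062", 1000, 1000))
```

Drawing these corners on the source image is a quick way to confirm that the export itself is sane before looking at training.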

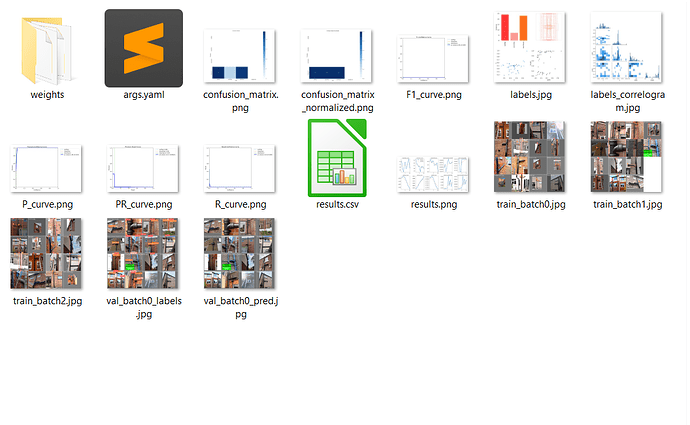

I followed a tutorial that gave me the following Python code, which produced a train folder (see image) and a weights folder with best.pt and last.pt.

Code:

    from ultralytics import YOLO

    # Load a model
    model = YOLO("yolov8n.yaml")
    model.train(data="config.yaml", epochs=10)

config.yaml:

    path: D:/techy/Vision/gutters/train
    train: images/train
    val: images/train
    names:
      0: soilPipe
      1: wastePipe
      2: downSpout
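One thing worth checking with this config: Ultralytics looks for each label file by swapping `images` for `labels` in the image path, so with the paths above the `.txt` files would need to sit in `labels/train` next to `images/train`. A rough sketch of a check for that, where the function name `find_images_without_labels` and the list of image suffixes are assumptions made for illustration:

```python
from pathlib import Path

IMAGE_SUFFIXES = {".jpg", ".jpeg", ".png", ".bmp"}


def find_images_without_labels(dataset_root, split="train"):
    """Return names of images whose label file is missing or empty."""
    root = Path(dataset_root)
    image_dir = root / "images" / split
    label_dir = root / "labels" / split
    missing = []
    for image_path in sorted(image_dir.iterdir()):
        if image_path.suffix.lower() not in IMAGE_SUFFIXES:
            continue
        # Expected label: same file stem, .txt extension, under labels/<split>.
        label_path = label_dir / (image_path.stem + ".txt")
        if not label_path.is_file() or label_path.stat().st_size == 0:
            missing.append(image_path.name)
    return missing


if __name__ == "__main__":
    root = Path("D:/techy/Vision/gutters/train")  # 'path' value from config.yaml
    if (root / "images" / "train").is_dir():
        print(find_images_without_labels(root))
    else:
        print("images/train not found under", root)
```

If every image shows up in the returned list, the training run probably saw only unlabelled background images, which could explain a model that never detects anything.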

I have a Python script that works with mp4 files and detects other objects when I use ‘yolov8x-oiv7.pt’:

    import cv2
    from ultralytics import YOLO


    def process_frame(frame, model):
        results = model(frame)
        if results:
            for result in results:
                if hasattr(result, 'boxes') and len(result.boxes) > 0:
                    for box in result.boxes:
                        x1, y1, x2, y2 = box.xyxy[0].cpu().numpy()
                        conf = box.conf.cpu().numpy()[0]
                        cls_id = box.cls.cpu().numpy()[0]
                        label = f"{result.names[int(cls_id)]}: {conf:.2f}"
                        (text_width, text_height), _ = cv2.getTextSize(label, cv2.FONT_HERSHEY_SIMPLEX, 0.5, 1)
                        cv2.rectangle(frame, (int(x1), int(y1) - 10 - text_height), (int(x1) + text_width, int(y1)), (255, 255, 255), cv2.FILLED)
                        cv2.putText(frame, label, (int(x1), int(y1) - 10), cv2.FONT_HERSHEY_SIMPLEX, 0.5, (0, 0, 0), 1)
                        cv2.rectangle(frame, (int(x1), int(y1)), (int(x2), int(y2)), (0, 255, 0), 2)
        return frame


    def main():
        #model = YOLO('best.pt')  # Load your model
        model = YOLO('yolov8x-oiv7.pt')
        video_path = 'soilpipe4.mp4'  # Path to your video file
        cap = cv2.VideoCapture(video_path)  # Open the video file
        if not cap.isOpened():
            print("Error: Failed to open video file.")
            return
        while True:
            ret, frame = cap.read()
            if not ret:
                print("End of video file reached.")
                break
            frame = process_frame(frame, model)
            # Display the processed frame
            cv2.imshow("YOLOv8 Object Detection - Video", frame)
            if cv2.waitKey(1) & 0xFF == ord('q'):  # Press 'q' to quit
                break
        cap.release()
        cv2.destroyAllWindows()


    if __name__ == "__main__":
        main()

When I use best.pt I get no detections at all. I also changed the script to loop through the original image folders, but still got no detections.

I would have expected to get at least something back.
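One way to tell "the model predicts nothing at all" apart from "the model predicts only very weak boxes" might be to lower the confidence threshold, since `predict` filters at 0.25 by default. A minimal sketch, assuming the weights load correctly; the helper names `count_boxes` and `summarise_detections` and the threshold of 0.01 are illustrative choices, not anything from the tutorial:

```python
def count_boxes(results):
    """Map each result's image path to the number of boxes found in it."""
    return {r.path: len(r.boxes) for r in results}


def summarise_detections(weights_path, image_dir, conf=0.01):
    """Run a trained model over a folder with a very low confidence cut-off."""
    # Imported here so count_boxes can be used without ultralytics installed.
    from ultralytics import YOLO

    model = YOLO(weights_path)
    # conf=0.01 keeps almost every candidate box (the default is 0.25).
    results = model.predict(source=image_dir, conf=conf, verbose=False)
    return count_boxes(results)
```

Calling `summarise_detections("best.pt", "images/train")` with the real paths would then show, per image, whether any boxes survive at all. All zeros even at this threshold would point towards the training data or the training run rather than the inference script.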

Two thoughts:

- I have missed a step somewhere.

- The best.pt file is a different version from the one that `from ultralytics import YOLO` expects.

I can’t find a Python script that converts from YOLO 1.1 to YOLOv8.

Be gentle with me and answer at idiot level please.